CSV & Excel: escape from the encoding hell in NodeJS

Georges Biaux3 min read

CSV is great, especially to export or import table-like data into your system. It is plain-text and it can also be opened with Microsoft Excel and be edited by almost anyone, even nontechnical people.

But, despite all those advantages, the use of CSV that has been edited with Excel comes with a massive drawback: if your file contains non-ASCII characters, you enter what I personally call the encoding hell.

Some context

As a French company, we deal on a regular basis with non-ASCII characters like éèêàç and so on. We were developing a web application where our client needed to import data using CSV files.

The files were to be modified by nontechnical personnel using Microsoft Excel for Windows (2007 version) and we couldn’t change that. The application backend was coded using NodeJS and the Loopback framework.

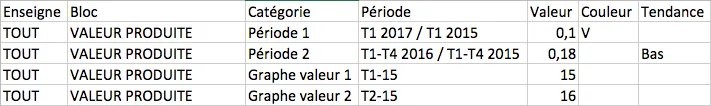

So we developed the CSV importer and data looking like this in Excel:

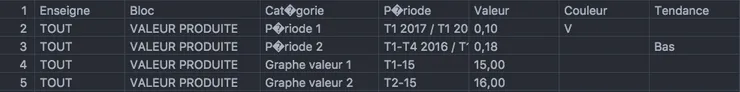

Ended up like this in the database:

Needless to say that our client was not satisfied with the presence of this character: �.

What caused this problem

After a few research, we discovered that Excel does not encode CSV files in good old UTF-8 when saving them.

Fact is that Excel for Windows encodes CSV in ISO-8859-1 and Excel for OSX in Macintosh encoding. Unfortunately, it seems that this cannot be overridden in Excel, for now, so we couldn’t ask our client to change his configuration. We had to handle this within the application.

How we solved it

iconv-lite is a great javascript library for dealing with encoding conversions. After having figured out from which encoding decode our files, we only had to add this code to our CSV importer, right before the CSV parsing:

iconv = require('iconv-lite');

...

originalFile = fs.readFileSync(filename, {encoding: 'binary'});

decodedFile = decode(originalFile, 'iso88591');

We knew that our client would only use Excel for Windows, so we didn’t bother implement an OSX compatible solution, but if you need to create a multi OS importer, you could use a trick like this:

originalFile = fs.readFileSync(filename, {encoding: 'binary'}); decodedFile = decode(originalFile, 'iso88591'); if(decodedFile.indexOf('é') < 0) { decodedFile = decode(originalFile, 'macintosh'); }

Here, we know that after decoding we should find the character “é” in the header of the CSV (in the “Catégorie” and “Période” columns). So we try first the Windows compatible decoding and if it fails (if we do not find the “é”), we try the OSX compatible one.

Conclusion

Yay! You escaped the encoding hell! If you want to learn more about CSV import in Loopback, you should certainly read this great article about user-friendly transactional CSV import.